Guide

Ultimate Guide to Data Cleaning with Python

![]()

Written By Jess Feldman

![]()

Written By Jess Feldman

Course Report strives to create the most trust-worthy content about coding bootcamps. Read more about Course Report’s Editorial Policy and How We Make Money.

Course Report strives to create the most trust-worthy content about coding bootcamps. Read more about Course Report’s Editorial Policy and How We Make Money.

Data cleaning is an integral component of analyzing data. But what actually goes into this important skill and which tools should you choose to clean data efficiently? Lighthouse Labs Data Science Mentor, Andrew Berry, walks us through the process of preparing data and identifying issues within a data set, and how data professionals use Python plugins like NumPy and PySpark to clean inaccurate data. Plus, get a sneak peek into how Lighthouse Labs integrates data cleaning into their Data Science Bootcamp curriculum.

Beginning on February 17th, Lighthouse Labs invites everyone to learn how to use data in their 21-Day Data Challenge! No matter your skill level, get introduced to the fundamentals of data in a fun, accessible, engaging way through daily challenges.

Data cleaning is analyzing your data to identify incompleteness or inaccuracies and then "cleaning" or correcting the data in order for the data to be useful. Other terms like data scrubbing, data validation, and data cleansing all refer to the same process. It takes a lot of common sense to clean data, but there are also tools that data professionals use for data cleaning.

No matter what field you are in – from data science to data engineering to data analysis to machine learning – when you are analyzing data, it is important to be sure the data is high quality and properly prepared. If the data is not properly cleaned, you may pick up inaccurate results. Even in machine learning, if your data is messy, the machine learning algorithms may give the wrong weights to determine its output.

It’s safe to assume that the steps to data cleaning are always going to be the same, regardless if it's now or 10-20 years from now. We may see new software that can quicken and simplify the process, but overall all data professionals should have a good grasp on the data cleaning basics.

Everyone who is a data professional will touch data cleaning at some point. The data cleaning process can take over half of a data professional’s time because it is so crucial to get it right. Even though data cleaning is a simple process, it is very time consuming to ensure that the data is correctly cleansed.

Data Cleaning for Non-Data Employees

If your company is data-driven, it's nice to know the steps of how to data clean and the rationale of how to approach data cleaning. In a small organization, you can clean data by using Excel or Google Sheets. For larger companies with legacy systems who may be switching over to new software or databases, data cleaning may mean handling large data sets. These require more manpower to clean, and non-data employees could be asked for their help with the task.

The data cleaning process begins by determining what kind of data you have and if your data is corrupted.

Corrupted data can look like missing rows, cells, or columns. To prepare the data for cleaning, you will need to fill in or remove parts of the data in the most sensible way.

Pro-Tip: Make sure you have the raw, messy data saved as a separate file! Your data cleaning should be saved as a new file. It's important to keep the original file as is in case you need to totally redo your data cleaning.

Once you have prepared your data set and it is saved as a new file, you can begin data cleaning!

Since there are so many types of data, every data set will require a customized approach to data cleaning.

When cleaning data, use common sense to inform your decision-making. When you encounter errors in your data, determine why they happened and find a solution. Make sure you understand your business problem, and what data is relevant to your problem. That will help you know what the best course of action is.

Keep in mind that determining the errors are and figuring out how to fix them takes the longest amount of time in the data cleaning process, but once you have a plan, carrying it out is easy.

Why is it important to document your work as you clean data?

It's important to showcase each step of your data cleaning process in case you realize that one of your steps made little sense. Using your documentation, you can quickly address the problem.

I always tell my students: Make sure you document clearly every step you take. Your data cleaning documentation should answer the following:

You can write down your data cleaning documentation in a Jupyter Notebook or Python script.

Many tools can facilitate the data cleaning process, so the data professional will need to decide which tool will work best for which data set cleaning project.

For small sets of data that do not require a data professional, Excel and Google Sheets can handle simple data cleaning.

Many data scientists and data analysts use Python or R as their main data tool. When using Python, I recommend the following plugins:

Other plugins might have tools and functions to make it easier, but Pyspark, NumPy, and Pandas are the main tools that hold and visualize the data for you.

For someone who isn't technical but wants to use robust software for data cleaning, try Open Refine. Open Refine is a free tool that is straightforward and intuitive, and allows the user to easily upload CSV files. Those who know how to use Python would not use Open Refine, but it is a tool many use to learn data cleaning from scratch.

Some larger companies may have customized, dedicated software for cleaning and validating data sets.

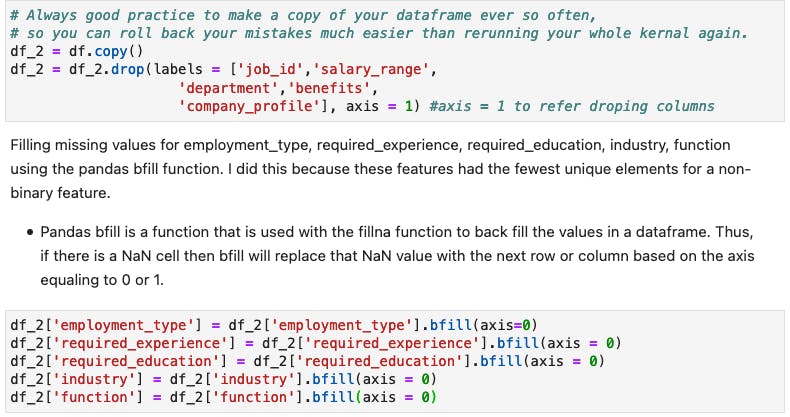

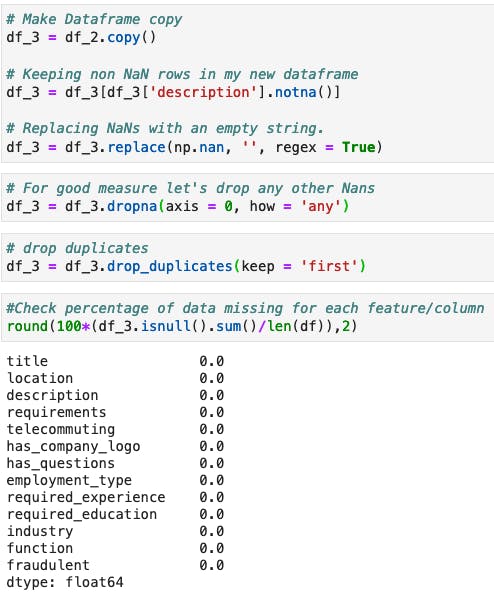

Using Python for data cleaning makes the data cleaning process really efficient. To use Python for data cleaning, you need to know the basics of Python, how to create functions, and how data is stored. Data is stored in a tabular format with rows and columns, and often data is stored in the cloud or as a CSV, Google Sheets, or Excel file. As a data professional, you will be importing that data through Python's Pandas plugin so you can easily visualize the cells, rows, and columns.

To clean data with Python, you can use Python functions or create your own Python functions. The good news is that it’s relatively easy to create your own customized functions! To create a function, you need to base it on what the data represents and what the data is used for.

For example: Let’s say your sales data set has one column based on sales regions followed by a numerical column that has many missing values. You can fill those missing values with the mean or median by yourself or you could create a Python function. If you were looking to only fill the missing values from the Northeast sales region, you could customize your Python function so it checks the numerical row against the sales region column to then fill in the missing value with the average from the data of the Northeast sales region.

What is great about using Python's custom functions is that you can define the function once, and then run it through your whole data set, and depending on your function, it will automatically update and return with a cleaner output.

1. A Python function can be used to check missing data:

2. You can then use a Python function to drop-fill that missing data:

3. You can quickly replace or update values in your data with a Python function:

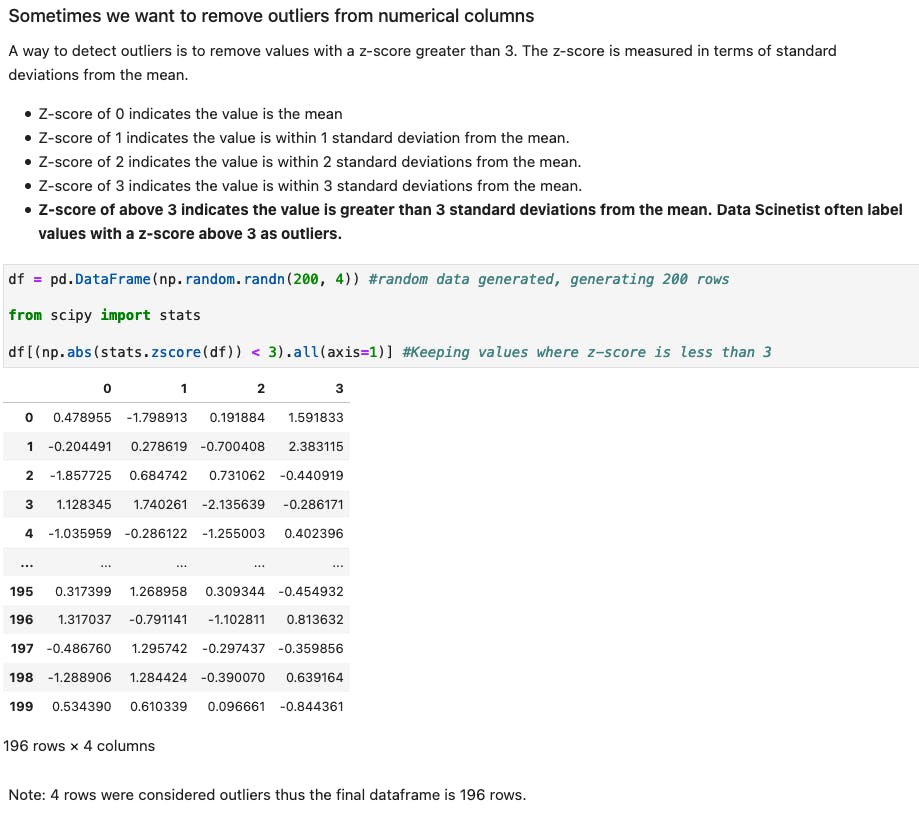

4. Python functions can also help you detect and remove outliers:

At Lighthouse Labs, we know how important data cleaning is, so data cleaning is introduced early on in our data science bootcamp curriculum. Throughout the program, we give students a variety of business problems and use cases. The data sets we provide them are usually messy, chaotic, or incomplete, and before the students can solve the problem, they have to go through all of the data cleaning steps. We reinforce this every time we introduce a new machine learning model or problem to solve. This way, when students graduate from the bootcamp, data cleaning becomes an intuitive step in their data workflow.

Should incoming data science students already know how to clean data?

You do not need to know how to clean data before enrolling at Lighthouse Labs. We cover data cleaning in-depth in both the part-time and full-time data science bootcamp.

To practice data cleaning, I recommend people find messy data sets and try to clean them! You can find datasets on Kaggle, or you can locate census data, which is a challenge to clean and prep because usually in Excel files. Any data analyst or data scientist would say that working with census data is good practice!

I also recommend that you learn regular expressions, which is a type of syntaxing and code that you can apply in most languages that use operators and wildcards to detect patterns in your data set. From there, you can clean that based on the patterns you identify in your data set. Perfecting this will speed up your data cleaning process because essentially it's detecting patterns and applying it.

Find out more and read Lighthouse Labs reviews on Course Report. This article was produced by the Course Report team in partnership with Lighthouse Labs.

Jess Feldman, Content Manager at Course Report

Jess Feldman is an accomplished writer and the Content Manager at Course Report, the leading platform for career changers who are exploring coding bootcamps. With a background in writing, teaching, and social media management, Jess plays a pivotal role in helping Course Report readers make informed decisions about their educational journey.

Enter your email to join our newsletter community.