What is Natural Language Processing?

Written By Imogen Crispe

Course Report strives to create the most trustworthy content about coding bootcamps. Read more about Course Report’s Editorial Policy and How We Make Money.


Data Scientists need a whole toolbox of skills to be able to analyze and manipulate data on the job. One of those skills is Natural Language Processing (NLP), which helps machines understand and classify human speech and writing. To learn more, we asked Data Scientist Lauren Washington, who also mentors Thinkful data science students, to explain what NLP actually is, how companies like Google and Facebook use it, and why it’s a useful skill for data scientists. Plus, find resources to help you get started with Natural Language Processing!

NLP is basically feature engineering. It isn't a machine learning algorithm in itself; it's a way of taking natural text and turning it into something that an algorithm can use. As a data scientist, 80% of your job is being a data janitor: cleaning things up and turning them into data and features that can be worked with. Natural language processing is a way to process that textual data and turn it into numerical or categorical values that you can use to actually model text.
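To make "turning text into numerical values" concrete, here is a minimal bag-of-words sketch in plain Python. The tokenizer (lowercase, split on anything that isn't a letter, digit, or apostrophe) and the function names are illustrative assumptions, not a specific library's API; each vocabulary word becomes one numeric column that a model could consume.

```python
import re
from collections import Counter

def tokenize(text):
    # Lowercase and keep runs of letters, digits, and apostrophes as tokens.
    return re.findall(r"[a-z0-9']+", text.lower())

def build_vocabulary(documents):
    # Give each distinct token a column index, in first-seen order.
    vocab = {}
    for doc in documents:
        for token in tokenize(doc):
            if token not in vocab:
                vocab[token] = len(vocab)
    return vocab

def to_count_vector(text, vocab):
    # One numeric column per vocabulary word; unseen tokens are ignored.
    counts = Counter(tokenize(text))
    return [counts[word] for word in vocab]

docs = ["The cat sat on the mat.", "The dog sat."]
vocab = build_vocabulary(docs)
print(vocab)  # {'the': 0, 'cat': 1, 'sat': 2, 'on': 3, 'mat': 4, 'dog': 5}
print([to_count_vector(d, vocab) for d in docs])  # [[2, 1, 1, 1, 1, 0], [1, 0, 1, 0, 0, 1]]
```

Libraries such as scikit-learn offer production-ready versions of this idea, but the output is the same kind of thing: a table of numbers derived from text.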

A good example is an email spam filter. A machine can judge that a message is spam or unimportant based on word-frequency counts derived from bodies of text.
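A minimal sketch of a frequency-based spam score, assuming a small hand-labeled set of spam and legitimate messages. It uses a naive-Bayes-style log-likelihood ratio with add-one smoothing and assumes equal class priors; the training messages and function names are hypothetical, and real filters use far more data and features.

```python
import math
import re
from collections import Counter

def tokenize(text):
    return re.findall(r"[a-z0-9']+", text.lower())

def train(spam_messages, ham_messages):
    # Frequency count of every word in each class.
    spam_counts = Counter(t for m in spam_messages for t in tokenize(m))
    ham_counts = Counter(t for m in ham_messages for t in tokenize(m))
    return spam_counts, ham_counts

def spam_score(message, spam_counts, ham_counts):
    # Sum of per-word log-ratios with add-one smoothing; a score above 0 leans spam.
    vocab_size = len(set(spam_counts) | set(ham_counts))
    spam_total = sum(spam_counts.values())
    ham_total = sum(ham_counts.values())
    score = 0.0
    for token in tokenize(message):
        p_spam = (spam_counts[token] + 1) / (spam_total + vocab_size)
        p_ham = (ham_counts[token] + 1) / (ham_total + vocab_size)
        score += math.log(p_spam / p_ham)
    return score

# Hypothetical training data.
spam_counts, ham_counts = train(
    ["Win free money now", "Free prize, claim now"],
    ["Meeting notes for Monday", "Lunch on Monday?"],
)
print(spam_score("Claim your free money", spam_counts, ham_counts) > 0)  # True
```

Smoothing matters here: without the `+ 1`, a single word never seen in one class would make that class's probability zero.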

Virtual Assistants: I use my virtual assistant to play Spotify, but if I word my commands differently, it reacts differently. That’s natural language processing: it’s using my words to try to work out what I'm asking it to do. When you put keywords in your request, it picks those up and throws out the rest, which is one basic way natural language processing works.
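The "pick up the keywords, throw out the rest" behavior can be sketched as stopword filtering plus keyword matching. The stopword list, intent names, and keyword sets below are hypothetical; commercial assistants use trained language-understanding models rather than a lookup table like this.

```python
import re

# Hypothetical stopwords and intent table, for illustration only.
STOPWORDS = {"a", "an", "the", "please", "can", "you", "me", "some", "on", "to", "i"}
INTENT_KEYWORDS = {
    "play_music": {"play", "music", "song", "spotify"},
    "set_timer": {"timer", "minutes", "remind"},
}

def keywords(utterance):
    # Keep only the words that carry meaning; throw out the rest.
    tokens = re.findall(r"[a-z0-9']+", utterance.lower())
    return [t for t in tokens if t not in STOPWORDS]

def detect_intent(utterance):
    # Pick the intent whose keyword set overlaps most with the request.
    words = set(keywords(utterance))
    best_intent, best_overlap = None, 0
    for intent, vocab in INTENT_KEYWORDS.items():
        overlap = len(words & vocab)
        if overlap > best_overlap:
            best_intent, best_overlap = intent, overlap
    return best_intent

print(keywords("Can you play some music on Spotify?"))  # ['play', 'music', 'spotify']
print(detect_intent("Please set a timer for 10 minutes"))  # set_timer
```

This also shows why wording matters: a request that contains none of the expected keywords falls through and returns `None`.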

Chatbots: Chatbots use natural language processing to map a question against a database and say, "Okay, usually when somebody asks a question like this, this is the type of answer that they want."
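A minimal sketch of that lookup, assuming the "database" is a small table of known questions and canned answers. It uses Jaccard word overlap as the similarity measure and a hypothetical threshold of 0.2 below which the bot hands off to a human; both choices are illustrative, not how any particular chatbot product works.

```python
import re

# Hypothetical FAQ table: known question -> canned answer.
FAQ = {
    "what are your opening hours": "We're open 9am to 5pm, Monday to Friday.",
    "how do i reset my password": "Use the 'Forgot password' link on the login page.",
    "where is my order": "You can track your order from the Orders page.",
}

def tokens(text):
    return set(re.findall(r"[a-z0-9']+", text.lower()))

def jaccard(a, b):
    # Shared words divided by all distinct words across both sets.
    return len(a & b) / len(a | b) if a | b else 0.0

def answer(question, threshold=0.2):
    # Find the stored question most similar to the user's and return its answer.
    q = tokens(question)
    best = max(FAQ, key=lambda known: jaccard(q, tokens(known)))
    if jaccard(q, tokens(best)) < threshold:
        return "Sorry, I'm not sure. Let me connect you with a person."
    return FAQ[best]

print(answer("I forgot my password, how can I reset it?"))
```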

Recommendation engines: This is software that analyzes data to make suggestions to a user based on their interests or browsing habits. Once you start learning more about natural language processing and machine learning, it will be really funny to go to different websites and think, "Oh, they probably just have this one algorithm underneath that's looking at what I'm typing, and that's why this website is able to recommend these things to me."
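One simple way a site could turn what you type into recommendations is content-based ranking: weight words with TF-IDF (rarer words count more) and rank items by cosine similarity to your text. The catalog and function names below are hypothetical, and real recommendation engines usually combine this kind of signal with browsing and purchase behavior.

```python
import math
import re
from collections import Counter

# Hypothetical product catalog: item name -> description.
CATALOG = {
    "trail running shoes": "lightweight shoes for trail running on rocky paths",
    "road running shoes": "cushioned shoes for road running and marathons",
    "camping tent": "two person tent for camping and hiking trips",
    "hiking backpack": "backpack for hiking and camping with rain cover",
}

def tokenize(text):
    return re.findall(r"[a-z0-9']+", text.lower())

def idf_weights(docs):
    # Words found in fewer descriptions get a higher weight.
    n = len(docs)
    df = Counter(word for doc in docs for word in set(tokenize(doc)))
    return {word: math.log(n / count) + 1.0 for word, count in df.items()}

def tfidf(text, idf):
    counts = Counter(tokenize(text))
    return {w: c * idf[w] for w, c in counts.items() if w in idf}

def cosine(u, v):
    dot = sum(x * v.get(w, 0.0) for w, x in u.items())
    norm = math.sqrt(sum(x * x for x in u.values())) * math.sqrt(sum(x * x for x in v.values()))
    return dot / norm if norm else 0.0

def recommend(user_text, top_n=2):
    idf = idf_weights(list(CATALOG.values()))
    query = tfidf(user_text, idf)
    scored = {item: cosine(query, tfidf(desc, idf)) for item, desc in CATALOG.items()}
    return sorted(scored, key=scored.get, reverse=True)[:top_n]

print(recommend("looking for shoes for a trail run", top_n=1))  # ['trail running shoes']
```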

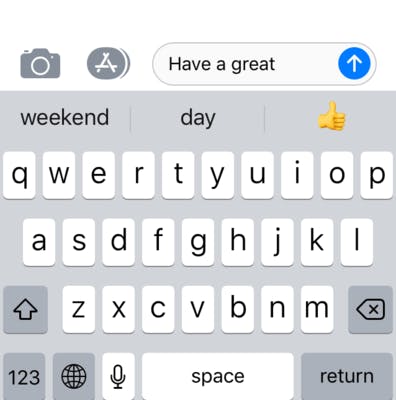

Natural Language Processing can also do things like sentiment analysis to see the polarity of something – if something someone is saying is really positive, negative, or neutral. Another example is predictive text, which we see on our cell phones every day.
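A minimal sketch of polarity scoring using a word lexicon, assuming a tiny hand-made lexicon and a simple rule that flips a word's score when it directly follows a negator such as "not". The lexicon values are hypothetical; published sentiment lexicons contain thousands of scored words, and many systems use trained classifiers instead.

```python
import re

# Tiny hypothetical sentiment lexicon.
LEXICON = {"love": 2, "great": 2, "good": 1, "happy": 1,
           "bad": -1, "slow": -1, "terrible": -2, "hate": -2}
NEGATORS = {"not", "never", "no"}

def polarity(text):
    # Sum word scores, flipping the sign of a word right after a negator.
    tokens = re.findall(r"[a-z']+", text.lower())
    score = 0
    for i, token in enumerate(tokens):
        value = LEXICON.get(token, 0)
        if i > 0 and tokens[i - 1] in NEGATORS:
            value = -value
        score += value
    if score > 0:
        return "positive", score
    if score < 0:
        return "negative", score
    return "neutral", score

print(polarity("I love this, it's great"))  # ('positive', 4)
print(polarity("Delivery was not good and support was slow"))  # ('negative', -2)
```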

As long as you know how to do supervised and unsupervised learning, natural language processing should be pretty straightforward.

In a supervised learning model, the categories are already defined, e.g. important versus spam in your email inbox. If you create a dataset of important and spam messages, you can determine which words are associated with important messages and which with spam, so that when a new email comes in, you can measure its similarity to the previous emails. A huge part of natural language processing is calculating the similarity between different words and datasets.
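The "compare a new email to previously labeled emails" idea can be sketched as a 1-nearest-neighbor classifier over word-count vectors with cosine similarity. The labeled examples are hypothetical, and nearest-neighbor is only one of several supervised approaches (naive Bayes and logistic regression are common alternatives).

```python
import math
import re
from collections import Counter

def vectorize(text):
    return Counter(re.findall(r"[a-z0-9']+", text.lower()))

def cosine_similarity(a, b):
    # Dot product of word counts divided by the vector lengths; 0 means no shared words.
    dot = sum(count * b[word] for word, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def classify(new_email, labeled_emails):
    # 1-nearest-neighbor: copy the label of the most similar labeled email.
    new_vec = vectorize(new_email)
    best_label, best_sim = None, -1.0
    for text, label in labeled_emails:
        sim = cosine_similarity(new_vec, vectorize(text))
        if sim > best_sim:
            best_label, best_sim = label, sim
    return best_label

# Hypothetical labeled inbox.
training = [
    ("Win a free cruise, claim your prize now", "spam"),
    ("Limited offer: free money, act now", "spam"),
    ("Agenda for Monday's project meeting", "important"),
    ("Can you review the project budget before Monday?", "important"),
]
print(classify("Claim your free prize today", training))  # spam
```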

Another option is to use an unsupervised learning model and do something like topic modeling. Take a textbook, for example. It has a table of contents, which gives a good indication of what its topics are. When you see the results of topic modeling on the textbook, you'll see that it pretty much groups the text into the same topics that you saw in the table of contents, because so many similar words appear within each part of the book. For NLP in general, you usually take a corpus (the full collection of documents), plus whatever you want to analyze, and then try to find the similarities between different subjects in it.
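Topic models such as LDA (available in Gensim as `LdaModel`) are probabilistic and too long to write from scratch here. As a simpler stand-in that shows the same unsupervised idea, grouping documents by shared vocabulary without any labels, the sketch below runs spherical k-means clustering on word-count vectors. The stopword list, the naive "first k documents" seeding, and the example documents are all assumptions for illustration; this is not LDA.

```python
import math
import re
from collections import Counter

# Hypothetical stopword list; common words would otherwise blur the clusters.
STOPWORDS = {"the", "a", "an", "and", "as", "by", "in", "of", "is", "when", "to", "for"}

def vectorize(text):
    # Unit-length word-count vector, stored sparsely as {word: weight}.
    counts = Counter(w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS)
    norm = math.sqrt(sum(c * c for c in counts.values()))
    return {w: c / norm for w, c in counts.items()} if norm else {}

def similarity(u, v):
    # Cosine similarity; both vectors are already unit length.
    return sum(x * v.get(w, 0.0) for w, x in u.items())

def cluster(documents, k, iterations=10):
    vectors = [vectorize(d) for d in documents]
    centroids = [dict(v) for v in vectors[:k]]  # naive seeding: the first k documents
    assignments = [0] * len(vectors)
    for _ in range(iterations):
        # Assign every document to its most similar centroid.
        assignments = [max(range(k), key=lambda j: similarity(v, centroids[j])) for v in vectors]
        # Move each centroid to the normalized sum of its member documents.
        for j in range(k):
            summed = Counter()
            for v, a in zip(vectors, assignments):
                if a == j:
                    for w, x in v.items():
                        summed[w] += x
            norm = math.sqrt(sum(x * x for x in summed.values()))
            if norm:
                centroids[j] = {w: x / norm for w, x in summed.items()}
    return assignments

docs = [
    "Cells divide by mitosis and cells grow",
    "Stocks fell as interest rates rose",
    "DNA in cells carries genes",
    "Investors sold stocks when rates climbed",
]
print(cluster(docs, k=2))  # [0, 1, 0, 1]
```

The two biology sentences end up together and the two finance sentences end up together, much like sections sharing a heading in a table of contents.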

Another NLP method is tokenization of text. Let's say that we have the words "natural language processing is." If we use unigrams (n-grams with n = 1), the first column would be the word "natural", the second the word "language", and the third the word "processing". If we use bigrams, the first column would be "natural-language", the second "language-processing", the third "processing-is", and so forth. This is how you turn a sentence into something that counts how many times each term (or bigram, or trigram) actually occurs.
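The n-gram columns from the "natural language processing is" example can be generated with a sliding window. This is a minimal sketch; joining words with "-" simply mirrors the notation used in the text, and the two-sentence corpus is hypothetical.

```python
import re
from collections import Counter

def ngrams(tokens, n):
    # Slide a window of n tokens across the list, joining each window with "-".
    return ["-".join(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

tokens = re.findall(r"[a-z]+", "Natural language processing is".lower())
print(ngrams(tokens, 1))  # ['natural', 'language', 'processing', 'is']
print(ngrams(tokens, 2))  # ['natural-language', 'language-processing', 'processing-is']

# Bigram frequency counts across a small corpus become numeric features.
corpus = ["natural language processing is fun",
          "language processing is feature engineering"]
bigram_counts = Counter(bg for doc in corpus for bg in ngrams(doc.split(), 2))
print(bigram_counts["language-processing"])  # 2
```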

When you’re just starting out, you’ll use supervised and unsupervised learning. But when you want to get a little bit deeper, you can use something like a neural network, and use recurrent neural networks to be able to do predictive text, or use convolutional neural networks to be able to figure out the context of the text.
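Before reaching for a recurrent neural network, it can help to see the simplest possible predictive-text baseline. The sketch below is a count-based bigram model, not an RNN: it suggests the words that most often followed the current word in some training text. The training sentences are hypothetical; an RNN improves on this by conditioning on much longer context.

```python
from collections import Counter, defaultdict

def train_bigrams(sentences):
    # For every word, count which words follow it in the training text.
    following = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            following[current][nxt] += 1
    return following

def suggest(following, word, k=3):
    # Offer the k most frequent continuations, like a phone keyboard's suggestion bar.
    return [w for w, _ in following[word.lower()].most_common(k)]

model = train_bigrams([
    "see you later",
    "see you later tonight",
    "talk to you soon",
])
print(suggest(model, "you"))  # ['later', 'soon']
```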

Python and R are the most common languages for NLP.

In Python, you can use the Natural Language Toolkit (NLTK) for a lot of things: tokenization, part-of-speech tagging, etc. Beautiful Soup is useful for pulling the text out of web pages, and spaCy and Gensim are great for processing and summarizing text, like news articles or a bibliography.

In R, there are openNLP, RTextTools, and tokenizers. qdap (Quantitative Discourse Analysis Package) is another helpful package. You can also use Keras or TensorFlow to build recurrent neural networks and convolutional neural networks.

Google uses NLP for their search engine. Once you start using natural language processing, you get a lot more efficient at Googling because you start thinking about the keywords that are most important to their algorithm to get the best results.

Facebook and other companies use NLP for their chatbots. If you forgot something in your cart, or if you have a question to ask a business, they have a bot that will immediately answer questions.

At customer service companies, you can use NLP to prioritize tickets by sentiment. Does this person sound really negative? Do they sound happy? Are they using positive keywords? Negative keywords? You can do a summarization of their tickets.
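A minimal sketch of prioritizing a support queue by negativity, assuming hypothetical keyword weights. A production system would more likely use a trained sentiment model or a full lexicon, but the sorting step would look the same.

```python
import re

# Hypothetical keyword weights, for illustration only.
NEGATIVE = {"angry": 3, "refund": 2, "broken": 2, "worst": 3, "cancel": 2, "late": 1}
POSITIVE = {"thanks": 1, "great": 2, "love": 2, "happy": 1}

def negativity(ticket):
    # Negative keywords add to the score; positive keywords subtract from it.
    words = re.findall(r"[a-z]+", ticket.lower())
    return sum(NEGATIVE.get(w, 0) - POSITIVE.get(w, 0) for w in words)

def prioritize(tickets):
    # Most negative tickets first, so the most urgent cases reach an agent soonest.
    return sorted(tickets, key=negativity, reverse=True)

queue = [
    "Thanks, the new feature is great!",
    "My order is late again. Worst service ever, I want a refund.",
    "How do I change my shipping address?",
]
for ticket in prioritize(queue):
    print(negativity(ticket), ticket)
```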

Natural Language Processing is really helpful in many contexts because most companies have free-text data that they don't yet know what to do with.

I've noticed that a lot of companies are actually looking for that natural language processing skillset now. A lot of companies have probably had humans spending hours reading through their free text data to try to gauge what's going on. And now we can do it in a more efficient way with data scientists who do natural language processing. That could be for automating a customer service queue and putting the most egregious cases at the top, or for analyzing customers and whether or not they like your products.

NLP is definitely a new skillset that's in demand, but it requires a lot of creativity. There are a lot of modules for working with plain text, but you must be able to build on top of them, adapt them to your particular use case, and be familiar with your business domain.

We offer NLP as a specialization at Thinkful and I get really excited when people choose it. But sometimes I hear people say, "I don't want anything to do with NLP," thinking that it’s going to be really hard. If you really like creativity, you like things that are constantly changing, and you enjoy a field that still requires reading a lot of research and whitepapers, then natural language processing is a great skill set to have.

Thinkful data science students have a few opportunities to be exposed to natural language processing in our curriculum:

Lauren's advice: Don’t be scared to get into NLP. At my first data science job, I wasn't tasked with natural language processing – I was doing a lot of experimentation and A/B testing. I knew that I wanted to get into NLP, so I started my own side projects, started going to marketing teams, asking to analyze their headlines, and things like that. Explore it, keep working at it, and look at different resources online where people actually show you how they work through a problem.

Remember: NLP is just a way of doing feature engineering. If you know how to do machine learning, you can pick up natural language processing, so please don't be discouraged.

Find out more and read Thinkful reviews on Course Report. Check out the Thinkful data science page.

Imogen Crispe, Content Creator and Entrepreneur

Imogen is a writer and content producer who loves exploring technology and education in her work. Her strong background in journalism, writing for newspapers and news websites, makes her a contributor with professionalism and integrity.
